Introduction

The Nash equilibrium has found a growing number of applications in both the Internet and economics. Counting all the Nash equilibria of a two-player game is known to be hard, even when every entry of the payoff matrices is 0 or 1.

Nevertheless, the complexity of finding a Nash equilibrium is open and has been described as one of the most important open problems in complexity theory today. A polynomial-time reduction has been given from finding a Nash equilibrium in general bimatrix games to finding one in games whose payoffs are all either 0 or 1 (Kim, 2004).

In complexity theory, once a problem has been shown to be intractable, research on it shifts toward more modest goals: polynomial-time approximation algorithms, or exponential lower bounds for restricted classes of algorithms.

A Nash equilibrium is a solution concept for a game involving two or more players, in which each player is assumed to know the equilibrium strategies of the other players, and no player has anything to gain by changing only his own strategy unilaterally (Kim, 2000).
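For a two-player game, this definition can be checked directly. The following is a minimal Python sketch under assumed conventions that are not part of the text: `A` and `B` are the row and column players' payoff matrices as lists of lists, and `x`, `y` are their mixed strategies. It compares each player's expected payoff with the best pure-strategy deviation; checking pure deviations is enough, because the payoff of any mixed deviation is a weighted average of pure-strategy payoffs.

```python
from fractions import Fraction


def expected_payoff(P, x, y):
    """Expected payoff x^T P y for mixed strategies x (rows) and y (columns)."""
    return sum(x[i] * P[i][j] * y[j] for i in range(len(x)) for j in range(len(y)))


def is_nash(A, B, x, y):
    """True if neither player gains by a unilateral switch to any pure strategy."""
    m, n = len(A), len(A[0])
    row_best = max(sum(A[i][j] * y[j] for j in range(n)) for i in range(m))
    col_best = max(sum(x[i] * B[i][j] for i in range(m)) for j in range(n))
    return row_best <= expected_payoff(A, x, y) and col_best <= expected_payoff(B, x, y)


# Matching pennies: the only equilibrium is for both players to mix 50/50.
A = [[1, -1], [-1, 1]]
B = [[-1, 1], [1, -1]]
half = Fraction(1, 2)
print(is_nash(A, B, [half, half], [half, half]))  # True
print(is_nash(A, B, [1, 0], [1, 0]))              # False
```

Exact fractions are used so that ties between payoffs are compared without floating-point error.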

Algorithm for the Nash equilibrium

We now give a general algorithm for computing a Nash equilibrium of a bimatrix game in exponential time.

The algorithm relies on the following proposition: if a Nash equilibrium with supports S1 = Supp(x) and S2 = Supp(y) exists, then a Nash equilibrium with exactly these supports can be computed in polynomial time. We compute it as follows:

Let $S_1^i$ be the $i$th row of $S_1$, and $S_2^j$ be the $j$th column of $S_2$.

We then solve a linear program in the following 2n + 3 variables (for a game in which each player has n pure strategies):

The variables are a, b ≥ 0, U, V and δ.

A solution satisfying the given constraints corresponds to a Nash equilibrium with supports (S1, S2). The constraints require the probabilities a_i to be non-negative and to sum to one; they must also be 0 outside the desired support and at least δ inside it (Freund, 2006).
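One standard way to write such a support-restricted linear program is sketched below. It uses notation that does not appear in the text and is assumed here: A and B are the row and column players' payoff matrices, so (Ab)_i is the row player's expected payoff from pure strategy i against b, and (a^T B)_j is the column player's expected payoff from pure strategy j against a. Treat it as a reconstruction under these assumptions, not as the exact program in the figures.

```latex
\begin{aligned}
\max\quad & \delta \\
\text{s.t.}\quad & \textstyle\sum_i a_i = 1, \qquad \sum_j b_j = 1, \\
& a_i \ge \delta \ (i \in S_1), \qquad a_i = 0 \ (i \notin S_1), \\
& b_j \ge \delta \ (j \in S_2), \qquad b_j = 0 \ (j \notin S_2), \\
& (A b)_i = U \ (i \in S_1), \qquad (A b)_i \le U \ (i \notin S_1), \\
& (a^{\mathsf T} B)_j = V \ (j \in S_2), \qquad (a^{\mathsf T} B)_j \le V \ (j \notin S_2).
\end{aligned}
```

For an n × n game, a and b contribute n variables each, and U, V and δ add three more, giving the 2n + 3 variables. An optimal solution with δ > 0 has supports exactly S1 and S2.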

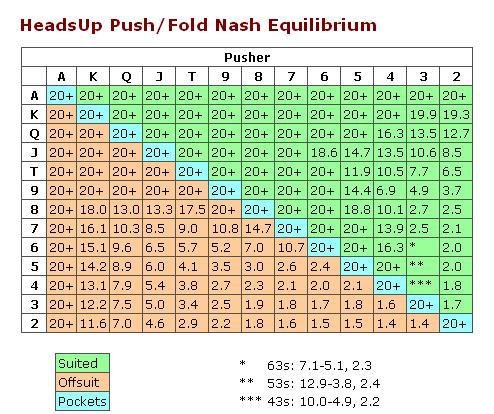

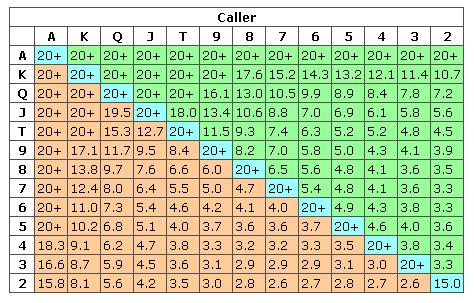

The following charts show the Nash equilibrium tables.

The steps I used to calculate the Nash equilibrium were as follows:

- I examined the payoff matrix and determined which payoffs belong to which player.

- I determined each player's best response to every possible action of the other player, repeating this process for every player.

- A Nash equilibrium exists wherever each player's strategy is a best response to the other player's strategy.
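The best-response procedure in these steps can be sketched in Python for pure strategies. The function name `pure_nash` and the list-of-lists payoff format are illustrative assumptions. The sketch finds only pure-strategy equilibria, so a game such as matching pennies, whose only equilibrium is mixed, yields an empty list.

```python
def pure_nash(A, B):
    """Return all (row, column) pure-strategy profiles that are mutual best responses.

    A[i][j] and B[i][j] are the row and column players' payoffs when the row
    player picks i and the column player picks j.
    """
    m, n = len(A), len(A[0])
    equilibria = []
    for i in range(m):
        for j in range(n):
            # Row i is a best response to column j ...
            row_ok = A[i][j] == max(A[r][j] for r in range(m))
            # ... and column j is a best response to row i.
            col_ok = B[i][j] == max(B[i][c] for c in range(n))
            if row_ok and col_ok:
                equilibria.append((i, j))
    return equilibria


# Prisoner's dilemma (strategy 0 = cooperate, 1 = defect).
A = [[3, 0], [5, 1]]
B = [[3, 5], [0, 1]]
print(pure_nash(A, B))  # [(1, 1)]
```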


Proof of equilibrium

The algorithm simply enumerates all pairs (S1, S2), where S1 is a subset of the row player's pure strategies and S2 is a subset of the column player's pure strategies. For each pair, the linear program is used to find a Nash equilibrium with the specified supports, if one exists.

If no Nash equilibrium with these supports exists, the linear program still terminates in polynomial time and either reports that no solution exists or returns one with δ = 0.

In the latter case, the solution is still a valid Nash equilibrium, so the algorithm necessarily finds a Nash equilibrium whenever it runs the linear program on the supports of an actual Nash equilibrium.

Since there are at most $2^m \times 2^n$ pairs of support sets, where m and n are the numbers of pure strategies of the row and column players, the total running time is $(n + m)^{O(1)} \cdot 2^{m+n}$ (Kim, 2000).
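A compact Python sketch of support enumeration follows. It swaps in a simplification that is not stated in the text: assuming a nondegenerate game, it enumerates only supports of equal size and, instead of solving the linear program with δ, solves the indifference equations (every strategy in a support earns the same payoff, and the probabilities sum to one) exactly with fractions. The helper names `solve`, `indifferent_mix` and `support_enumeration` are illustrative, and the sketch can miss equilibria of degenerate games.

```python
from fractions import Fraction
from itertools import combinations


def solve(M, v):
    """Exact Gauss-Jordan elimination over the rationals; None if M is singular."""
    n = len(M)
    aug = [[Fraction(e) for e in row] + [Fraction(b)] for row, b in zip(M, v)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            return None
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col and aug[r][col] != 0:
                f = aug[r][col] / aug[col][col]
                aug[r] = [a - f * b for a, b in zip(aug[r], aug[col])]
    return [aug[r][n] / aug[r][r] for r in range(n)]


def indifferent_mix(P, own, other):
    """Mix over `other` that gives every strategy in `own` the same payoff w.

    P[i][j] is the payoff to the player choosing i when the opponent plays j.
    Returns (mix, w), or None if the system is singular or the mix is negative.
    """
    k = len(other)
    # Unknowns: the k mix probabilities, then the common payoff w.
    M = [[P[i][j] for j in other] + [-1] for i in own]
    M.append([1] * k + [0])  # probabilities sum to one
    sol = solve(M, [0] * len(own) + [1])
    if sol is None or any(q < 0 for q in sol[:k]):
        return None
    return sol[:k], sol[k]


def support_enumeration(A, B):
    """Yield Nash equilibria (x, y) of a nondegenerate bimatrix game."""
    m, n = len(A), len(A[0])
    Bt = [[B[i][j] for i in range(m)] for j in range(n)]  # column player's view
    for k in range(1, min(m, n) + 1):
        for S1 in combinations(range(m), k):
            for S2 in combinations(range(n), k):
                ry = indifferent_mix(A, S1, S2)   # y keeps rows in S1 indifferent
                rx = indifferent_mix(Bt, S2, S1)  # x keeps columns in S2 indifferent
                if ry is None or rx is None:
                    continue
                (yq, U), (xq, V) = ry, rx
                y = [dict(zip(S2, yq)).get(j, Fraction(0)) for j in range(n)]
                x = [dict(zip(S1, xq)).get(i, Fraction(0)) for i in range(m)]
                # Reject if some pure strategy outside the support does better.
                if all(sum(A[i][j] * y[j] for j in range(n)) <= U for i in range(m)) and all(
                    sum(x[i] * B[i][j] for i in range(m)) <= V for j in range(n)
                ):
                    yield x, y


coordination = [[2, 0], [0, 1]]
for x, y in support_enumeration(coordination, coordination):
    print(x, y)
```

On the coordination game `[[2, 0], [0, 1]]` (used for both players), the sketch reports the two pure equilibria and the mixed one in which each player plays the first strategy with probability 1/3. Like the algorithm in the text, it takes time exponential in the number of strategies, because the number of candidate supports grows exponentially.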

Proof of negotiation algorithm

Nash's original proof of the existence of an equilibrium relies on Brouwer's fixed-point theorem. The argument works with each player's best-response correspondence, which maps every profile of the opponents' mixed strategies (the mixed strategies of all players except player i) to the set of that player's best-response strategies.
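The best-response correspondence is set-valued. The version of the argument based on Brouwer's theorem replaces it with a continuous map on mixed-strategy profiles whose fixed points are exactly the Nash equilibria. Below is a minimal two-player sketch of that map in Python, using assumed payoff-matrix notation `A`, `B` that is not part of the text. It is a proof device rather than an algorithm: repeatedly applying it is not guaranteed to converge to an equilibrium.

```python
from fractions import Fraction


def nash_map(A, B, x, y):
    """Apply once the continuous map from Nash's Brouwer-based existence proof
    (two-player case). A strategy pair is a fixed point exactly when it is a
    Nash equilibrium."""
    x = [Fraction(v) for v in x]
    y = [Fraction(v) for v in y]
    m, n = len(x), len(y)
    row_pay = [sum(A[i][j] * y[j] for j in range(n)) for i in range(m)]
    col_pay = [sum(x[i] * B[i][j] for i in range(m)) for j in range(n)]
    u_row = sum(x[i] * row_pay[i] for i in range(m))
    u_col = sum(y[j] * col_pay[j] for j in range(n))
    # Gain from switching to each pure strategy, clipped at zero.
    g = [max(Fraction(0), p - u_row) for p in row_pay]
    h = [max(Fraction(0), p - u_col) for p in col_pay]
    # Shift probability toward profitable pure strategies, then renormalize.
    x_new = [(x[i] + g[i]) / (1 + sum(g)) for i in range(m)]
    y_new = [(y[j] + h[j]) / (1 + sum(h)) for j in range(n)]
    return x_new, y_new


A = [[1, -1], [-1, 1]]  # matching pennies, row player
B = [[-1, 1], [1, -1]]  # matching pennies, column player
half = Fraction(1, 2)
print(nash_map(A, B, [half, half], [half, half]))  # unchanged: an equilibrium
print(nash_map(A, B, [1, 0], [1, 0]))              # column player shifts toward column 1
```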

Analysis of negotiation algorithm

A Nash equilibrium is a solution concept for a game involving two or more players, in which each player is assumed to know the equilibrium strategies of the other players, and no player has anything to gain by changing only his own strategy unilaterally (Freund, 2006).



Autoregressive models

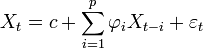

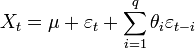

An autoregressive model of order p is denoted AR(p). It is defined as

$$X_t = c + \sum_{i=1}^{p} \varphi_i X_{t-i} + \varepsilon_t,$$

where:

- $\varphi_1, \ldots, \varphi_p$ are the parameters of the model;

- $c$ is a constant;

- $\varepsilon_t$ is white noise (Friedman, 2001).
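As an illustration of how the defining equation produces values, the following Python sketch computes the one-step-ahead AR(p) forecast $c + \sum_i \varphi_i X_{t-i}$ (the conditional mean, since the white noise has mean zero) and simulates a series by adding Gaussian noise. The function names, the zero initial values and the choice of Gaussian white noise are assumptions made for the example.

```python
import random


def ar_predict(history, phi, c=0.0):
    """One-step-ahead AR(p) forecast c + sum_i phi_i * X_{t-i}.

    history lists past values oldest first; phi[0] multiplies the most recent one.
    """
    p = len(phi)
    if len(history) < p:
        raise ValueError("need at least p past values")
    recent = history[::-1][:p]  # X_{t-1}, X_{t-2}, ..., X_{t-p}
    return c + sum(f * v for f, v in zip(phi, recent))


def ar_simulate(phi, c, sigma, n, seed=0):
    """Simulate n values of an AR(p) process driven by Gaussian white noise,
    starting from p zeros (an illustrative assumption)."""
    rng = random.Random(seed)
    x = [0.0] * len(phi)
    for _ in range(n):
        x.append(ar_predict(x, phi, c) + rng.gauss(0.0, sigma))
    return x[len(phi):]


print(ar_predict([1.0, 2.0, 3.0], phi=[0.5, 0.25], c=1.0))  # 3.0
print(ar_simulate([0.5], c=1.0, sigma=0.0, n=3))  # [1.0, 1.5, 1.75]
```

With the noise switched off (`sigma=0.0`), the simulated AR(1) series approaches its mean $c / (1 - \varphi_1) = 2$.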

For this formula to be used to predict natural phenomena, the model has to be extended to the full autoregressive moving-average (ARMA) model (Kim, 2000).

Autoregressive moving average model

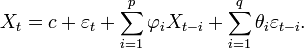

To describe a standard ARMA equation, we use the example below, which builds on the equations used in autoregressive models.

The notation ARMA(p, q) refers to a model with p autoregressive terms and q moving-average terms. It combines the AR(p) and MA(q) models; the moving-average part of the equation above is explained below.

MA(q)

The notation MA(q) refers to the moving-average model of order q:

$$X_t = \mu + \varepsilon_t + \theta_1 \varepsilon_{t-1} + \cdots + \theta_q \varepsilon_{t-q},$$

where:

- $\theta_1, \ldots, \theta_q$ are the parameters of the model;

- $\mu$ is the expectation of the time series $X_t$;

- $\varepsilon_t, \varepsilon_{t-1}, \ldots$ are white noise error terms.
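The AR and MA parts combine into a single recursion. The Python sketch below generates an ARMA(p, q) series $X_t = c + \varepsilon_t + \sum_{i=1}^{p} \varphi_i X_{t-i} + \sum_{j=1}^{q} \theta_j \varepsilon_{t-j}$ from a given noise sequence; passing the noise in explicitly makes the output reproducible. The function name and the convention that values before the start of the series are zero are assumptions. With `phi=[]` it reduces to the MA(q) model above with $\mu = c$.

```python
def arma_filter(phi, theta, eps, c=0.0):
    """Generate X_t = c + eps_t + sum_i phi_i X_{t-i} + sum_j theta_j eps_{t-j}.

    eps is the white-noise sequence; terms that would reach before t = 0 are
    treated as zero (an illustrative start-up convention).
    """
    x = []
    for t in range(len(eps)):
        ar = sum(phi[i] * x[t - 1 - i] for i in range(len(phi)) if t - 1 - i >= 0)
        ma = sum(theta[j] * eps[t - 1 - j] for j in range(len(theta)) if t - 1 - j >= 0)
        x.append(c + eps[t] + ar + ma)
    return x


print(arma_filter([0.5], [0.25], [1.0, 0.0, 0.0]))  # [1.0, 0.75, 0.375]
print(arma_filter([], [0.5, 0.5], [1.0, 1.0, 1.0]))  # MA(2): [1.0, 1.5, 2.0]
```

Feeding a single unit shock, as in the first call, shows the impulse response: the MA term adds $\theta_1$ once, and the AR term then decays it geometrically by $\varphi_1$.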

Example equation

The pth-order AR (AR(p)) random process is given by

$$x(n) = -a(1)\,x(n-1) - a(2)\,x(n-2) - \cdots - a(p)\,x(n-p) + w(n) \qquad (1)$$

where:

- $w(n)$ is white noise with variance $\sigma_w^2$;

- $a(k)$, $k = 1, \ldots, p$, are the AR parameters.

We assume that x(n) is real. The autocorrelation function of the AR process, $r_x(k)$, satisfies the same autoregressive recursion, which leads to the well-known Yule-Walker equations for the AR parameters:

$$r_x(k) = -\sum_{i=1}^{p} a(i)\, r_x(k-i), \qquad k \ge 1 \qquad (2)$$
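Taking the Yule-Walker equations for k = 1, …, p gives a p × p linear system in a(1), …, a(p) whose coefficient matrix contains $r_x(|k - i|)$, using $r_x(-k) = r_x(k)$ for a real process. The Python sketch below estimates the autocorrelations from data (with the biased estimator that divides by the number of samples, an assumption) and solves that system by Gaussian elimination. The function names are illustrative.

```python
def sample_autocorr(x, max_lag):
    """Biased sample autocorrelation r(k) = (1/N) sum_n x(n) x(n-k), k = 0..max_lag."""
    n = len(x)
    return [sum(x[t] * x[t - k] for t in range(k, n)) / n for k in range(max_lag + 1)]


def solve_linear(M, v):
    """Solve M z = v by Gaussian elimination with partial pivoting."""
    n = len(M)
    aug = [list(map(float, row)) + [float(b)] for row, b in zip(M, v)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[piv][col]) < 1e-12:
            raise ValueError("singular Yule-Walker system")
        aug[col], aug[piv] = aug[piv], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        z[r] = (aug[r][n] - sum(aug[r][c] * z[c] for c in range(r + 1, n))) / aug[r][r]
    return z


def yule_walker(r, p):
    """Solve r(k) = -sum_{i=1}^{p} a(i) r(k-i), k = 1..p, for [a(1), ..., a(p)].

    r[k] is the autocorrelation at lag k >= 0; r(-k) = r(k) for a real process.
    """
    M = [[r[abs(k - i)] for i in range(1, p + 1)] for k in range(1, p + 1)]
    v = [-r[k] for k in range(1, p + 1)]
    return solve_linear(M, v)


# Exact autocorrelation of x(n) = 0.5 x(n-1) + w(n), scaled so r(0) = 1.
print(yule_walker([1.0, 0.5, 0.25], p=2))  # [-0.5, 0.0]
```

Fitting an AR(2) model to the autocorrelation of an AR(1) process recovers a(1) = −0.5 (the sign follows the convention of the AR equation above) and a(2) = 0.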

Suppose the measurements used to estimate the AR parameters can be modeled as $\tilde{x}(n) = x(n) + v(n)$, where v(n) is white noise with variance $\sigma_v^2$. Then the parameter estimates derived from the Yule-Walker equations will be biased, since $r_{\tilde{x}}(k) = r_x(k) + \delta(k)\,\sigma_v^2$, where δ(k) is the Kronecker delta (discrete unit impulse).

It has been shown that the biased AR parameters produce a "flatter" AR spectrum, since the estimated poles of the AR process are biased toward the center of the unit circle [1]. A number of methods have been described for estimating the AR parameters from noisy measurements; some of them are surveyed in [1, 5] (Freund, 2006).
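The bias is easiest to see for p = 1. Using the Yule-Walker equation at k = 1 together with the noisy autocorrelation $r_{\tilde x}(k) = r_x(k) + \delta(k)\,\sigma_v^2$, and assuming $r_x(0) > 0$, a short derivation gives:

```latex
\begin{aligned}
x(n) &= -a(1)\,x(n-1) + w(n), \qquad a(1) = -\frac{r_x(1)}{r_x(0)}, \\
\hat a(1) &= -\frac{r_{\tilde x}(1)}{r_{\tilde x}(0)}
          = -\frac{r_x(1)}{r_x(0) + \sigma_v^2}
          = a(1)\,\frac{r_x(0)}{r_x(0) + \sigma_v^2}.
\end{aligned}
```

Because the factor $r_x(0)/(r_x(0) + \sigma_v^2)$ lies strictly between 0 and 1, the estimate shrinks in magnitude, and the single pole at $z = -a(1)$ moves toward the center of the unit circle.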

The Noise-Compensated Yule-Walker (NCYW) equations are defined as

$$(\tilde{R}_x - \lambda B)\, u = 0_{p+q} \qquad (3)$$

where the dimensions of $\tilde{R}_x$, $B$ and $u$ are (p + q) × (p + 1), (p + q) × (p + 1) and (p + 1) × 1, respectively. The unknowns in (3) are u and λ, so clearly, equality holds when $\lambda = \sigma_v^2$ and $u = [1\;\, a(1)\;\, a(2)\; \cdots\; a(p)]^{\mathsf T}$.

We observe that the first p equations are nonlinear in the AR parameters u and the measurement noise variance λ, whereas the next q equations are linear in u. In general, there exist p distinct pairs (λ, u) solving (3); the solution is taken to be the one corresponding to the minimum real value of λ.
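To make the structure of (3) concrete, the Python sketch below builds the matrices row by row: row k (k = 1, …, p + q) encodes $\sum_{i=0}^{p} \left[r_{\tilde x}(k-i) - \lambda\,\delta(k-i)\right] u_i = 0$ with $u_0 = 1$ and $u_i = a(i)$, so B has a single 1 wherever the lag k − i is zero, which only happens in the first p rows. The function names and the small AR(1) example (a(1) = −0.5, $r_x(k) = 0.5^{|k|}$, $\sigma_v^2 = 0.5$) are illustrative assumptions; the sketch only forms and checks the equations and does not implement a solver for λ.

```python
def ncyw_matrices(r_noisy, p, q):
    """Return (R, B), each (p+q) x (p+1), for the NCYW equations (R - lam*B) u = 0.

    r_noisy[k] is the noisy autocorrelation at lag k (k = 0, ..., p+q); negative
    lags use r(-k) = r(k). B marks the lag-0 entries inflated by the noise variance.
    """
    rows = range(1, p + q + 1)
    R = [[r_noisy[abs(k - i)] for i in range(p + 1)] for k in rows]
    B = [[1.0 if k == i else 0.0 for i in range(p + 1)] for k in rows]
    return R, B


def ncyw_residual(R, B, lam, u):
    """Evaluate (R - lam*B) u row by row."""
    return [sum((r - lam * b) * ui for r, b, ui in zip(Rk, Bk, u)) for Rk, Bk in zip(R, B)]


# AR(1) with a(1) = -0.5 and r_x(k) = 0.5**|k|, observed in white noise of
# variance 0.5, so only the lag-0 value is raised: r_noisy = [1.5, 0.5, 0.25].
R, B = ncyw_matrices([1.5, 0.5, 0.25], p=1, q=1)
u = [1.0, -0.5]  # [1, a(1)]
print(ncyw_residual(R, B, 0.5, u))  # [0.0, 0.0]: lambda equal to the noise variance
print(ncyw_residual(R, B, 0.0, u))  # [-0.25, 0.0]: plain Yule-Walker row fails
```

The second row of B is all zeros, which is why the last q equations do not involve λ and are linear in u.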

A number of authors have observed that solving the p nonlinear equations in addition to the q linear equations can improve the parameter estimates [2, 3, 4]. In [5], a matrix pencil method based on the Noise-Compensated Yule-Walker (NCYW) equations was presented and was found to outperform several other methods for AR-in-noise parameter estimation.

None of these papers has established the minimum number of linear equations required for the solution of the NCYW equations to be the unique, correct solution. It is clear that q ≥ p linear equations are sufficient to ensure that the solution is unique; in this case, all other (λ, u) solving (3) are complex.

However, the minimum value of q needed to ensure a unique solution has not been established. This is an important consideration: a large value of q, which would guarantee a unique solution, also requires computing more high-order autocorrelation lag estimates, which can reduce the solution accuracy because these tend to have a larger estimation variance.

Hence one would like to choose the smallest value of q that still guarantees a unique solution (Friedman, 2001).

One might expect that, since there are a total of p + q equations in p + 1 unknowns, fewer than q = p linear equations are needed. In other words, if only one linear equation were used (q = 1), there would still be p + 1 equations in p + 1 unknowns, so a unique solution could still exist. In this correspondence, we show that this is not the case and that q ≥ p is also a necessary condition for a unique solution to exist (Friedman, 2001).

Conclusion

The coalition-proof Nash equilibrium concept applies to non-cooperative settings in which players can freely share and discuss their strategies but cannot make binding commitments. The concept emphasizes immunity to self-enforcing deviations. Playing a best response, as in a Nash equilibrium, is therefore necessary for self-enforceability.

However, this is generally not sufficient when players can deviate jointly in mutually beneficial ways. Coalition-proof Nash equilibrium refines the concept through a stronger notion of self-enforceability that allows for multilateral deviations (Freund, 2006).




In summary, the Nash equilibrium has found a growing number of applications in both the Internet and economics. Counting all the Nash equilibria of a two-player game is known to be hard, even when every entry of the payoff matrices is 0 or 1.

Nevertheless, the complexity of finding a Nash equilibrium is open and has been described as one of the most important open problems in complexity theory today. A polynomial-time reduction has been given from finding a Nash equilibrium in general bimatrix games to finding one in games whose payoffs are all either 0 or 1.

In complexity theory, once a problem has been shown to be intractable, research on it shifts toward more modest goals: polynomial-time approximation algorithms, or exponential lower bounds for restricted classes of algorithms (Friedman, 2001).

References

Freund, S. (2006). Adaptive game playing using multiplicative weights. New York: Prentice Hall.

Friedman, S. (2001). Learning and implementation on the Internet. London: Springer.

Kim, C. (2000). Fixed Point Theorems with Applications to Economics and Game Theory. London: Cambridge University Press.

Kim, C. (2004). Infinite Dimensional Analysis. London: Springer.