Introduction

Calculators cannot be regarded as intelligent machines since they are not capable of working in the absence of human beings and cannot be taught. They are basically made to solve problems that people can readily solve. Regardless of speed and the complexity of mathematical problems that they can solve, all that they do is to accept some input and generate desired output.

They may be fast but that does not mean they are intelligent since they only generate what was previously programmed and fed into them. According to Levy (2010) “the aim of Artificial Intelligence is not to regurgitate what has previously been programmed by merely solving a problem. Artificial Intelligence should instead help humans come up with entirely new and different ways of solving problems”.

Unlike other disciplines that are well defined, Artificial Intelligence (AI) lacks a clear description that would make it readily understandable. It is easy to distinguish between chemistry, physics, astronomy, biology, mathematics, languages, art, and music because all these disciplines are well defined (Russell and Norvig, 2006).

There is no widely accepted definition of AI; in other words, AI lacks clearly defined goals. Artificially intelligent systems should be able to learn from experience with the aim of improving themselves; that is, intelligent machines should be those that are capable of learning.

History of Artificial Intelligence

Artificial Intelligence may seem to some to be one of the recent subjects of study given its close association with computer technology. On the contrary, AI has a long history which can be traced back to imagination, philosophy and fiction.

Disciplines such as engineering and electronics, which were established long ago, have had their fair share of influence on AI. Historically, people are known to have applied AI in areas like learning, deduction, and knowledge representation, as well as translation, associative memory, and theorem proving.

Artificial Intelligence has historically existed in the realm of theory. It began when Homer wrote about mechanical “tripods” waiting to serve the gods at dinner; thus, imaginary mechanical characters have been part of human culture since antiquity.

However, it was only about half a century ago, well after the Renaissance, that AI scientists undertook to build machines that imitated human thought and intelligent behavior, with the aim of testing whether Homer’s ideas could be put into practice. As demonstrated in films like The Terminator, RoboCop, Transformers and others, the prospect of machines that can mimic human beings remains in the future, but discussion of the implications of such a reality should be encouraged (Levy, 2010).

With a world population that has hit a record high of over seven billion and is still rising, the problems facing humanity are bound to escalate. Terrorism, diseases without cure, global warming, and increasing financial insecurity, even among the developing countries, as demonstrated by movements like “We are the 99 percent”, can overwhelm human beings at some point in the future.

Perhaps the invention of intelligent mechanical beings is the only solution to the social, economic and political woes that may overwhelm humanity. Like the ancient philosophers, should not modern human beings, who rely on machines more than ever before, stretch their imagination once more and save humanity from a host of current and future problems that are all too real? Perhaps governments will some day be required to relocate people to another planet due to overpopulation on Earth.

Descartes, the famous philosopher, suggested the possibility of inventing intelligent machines to help human beings discover who they truly are. Machines can eventually help bring about actual civilization by eliminating the limitations of human intelligence, which is prone to bias and can be easily corrupted.

Mechanical men designed to reason like human beings can help eliminate inefficiency in human institutions. They can be used to settle disputes in courts by using mechanical reasoning devices that use rules of logic. Prior to the invention of calculators, mathematics was a preserve for the few.

The invention of the calculator did not render mathematicians obsolete; it strengthened their skills and made it possible for many more people to learn the discipline efficiently and effectively, thereby improving humanity’s productivity. In Jewish folklore, there is an artificially created being called the Golem that is akin to the creature in Mary Shelley’s Frankenstein. Such imaginary characters have always fascinated people. However, they have also fueled an irrational fear of intelligent machines.

The first machine that seemed to emulate human thought was the chess-playing machine invented in the eighteenth century, known as the Turk. Chess is an intellectually demanding game since it requires a great deal of thought. People often believed that the Turk was able to think on its own and that the machine could play against a human opponent unaided.

However, unlike other people, a newspaper writer observed that the Turk was a machine since it played so well. It made flawless moves. Imagine a society where no professional ever made a mistake; would it not be perfect and ideal for human existence? Diplomatic rows would be solved free of human emotion and would be based on tradeoffs or win-win solutions, thereby making wars a thing of the past.

No one would ever have to lose his or her life because of a misdiagnosis by a doctor or a faulty sentence handed to an innocent citizen by a judge. The initial studies of AI involved chess, which was used for studying inference. In 1997, AI proved to be no maniac’s delusion when the world chess champion, Garry Kasparov, was defeated by Deep Blue, a program designed to test the relevance of artificial intelligence.

Buddhist views on Artificial Intelligence

Philosophy plays a major role in determining what direction is taken by the AI researchers. It is well understood that AI systems cannot be built in the absence of undivided attention from human mind given the very nature of the subject. Another thing that one ought to bear in mind is that AI is a multi-disciplinary subject that requires close consultations among stakeholders from all other disciplines.

Given that the human mind is the most intelligent thing on record, researchers naturally apply the knowledge they have gathered so far concerning its workings in building AI systems. According to Hagen (1999), “the Buddhist perspective on AI is a controversial one given the belief that the only way an individual can achieve enlightenment is by suppressing every plan and calculation made by the mind”.

According to Buddhism, an enlightened individual is devoid of common intelligence that shows how the world is made of interacting parts. The individual sees the world differently, he does not plan, classify, build mental assumptions to accommodate his observations and cares little if his actions should succeed or fail.

Contrary to this belief, AI researchers endeavor to equip their systems with the ability to learn from past observations or with knowledge derived from the day-to-day experiences of the researchers themselves. Artificial Intelligence systems use bits or parts of different information to arrive at decisions.

This means that when designing an AI system, one cannot help but divide the world into interacting parts that can be either relevant or irrelevant depending on the decisions that are to be made by the system. All successful robots and programs are based on this premise. Despite their usefulness to humans and the environment, Buddhists consider this to be against the natural state of the human mind (Hagen, 1999).

Buddhism teaches that all is flux and nothing abides. According to this principle there is no rule or regulation that can be applied constantly with success since change cannot be stopped from taking place by words written in any language. Any concept becomes obsolete with time. Buddhism teaches that change affects everything including our mind and ego that most people consider to be constant. This means that there can be perceptions without a perceiver or feelings without a feeler, interesting indeed (Hagen, 1999).

Buddhism teaches that everything is intimately interconnected and that reality cannot be a composition of parts. This means that everything is everything else. Thus, subdividing reality into parts will eventually produce wrong results. A system with this property is referred to as a chaotic system.

This kind of system cannot be divided into parts with limited, controllable interaction. In such a system, output is not proportional to input, since a minor change in input can lead to a disproportionately large and unpredictable change in output. An example of such a system is the weather. Predicting weather often involves segmenting a certain area of interest into smaller parts and then applying simplified rules to each section.

The results obtained from each section are combined, and the effects of each part on the other parts are calculated. However, it is a well-known fact that not even the most powerful supercomputers produce fully accurate weather predictions. According to Buddhism, it would be impossible to predict the weather by splitting it into parts. The only way of predicting it would be by considering it to be part of a whole.
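The sensitivity to small changes that characterizes a chaotic system can be illustrated with a short sketch. It does not model the weather; it uses the logistic map, a standard textbook example of chaos that is swapped in here purely for illustration. The function name, the starting value 0.2, and the perturbation of one part in ten billion are all assumptions chosen for the example.

```python
def logistic_map_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x) and return every value visited.

    With r = 4.0 the logistic map behaves chaotically on the interval (0, 1).
    """
    values = [x0]
    for _ in range(steps):
        current = values[-1]
        values.append(r * current * (1.0 - current))
    return values


# Two starting points that differ by a tiny amount (illustrative values).
baseline = logistic_map_trajectory(0.2, 60)
perturbed = logistic_map_trajectory(0.2 + 1e-10, 60)

# Distance between the two trajectories at every step.
gaps = [abs(a - b) for a, b in zip(baseline, perturbed)]

for step in (0, 10, 20, 30, 40, 50, 60):
    print(f"step {step:2d}: gap = {gaps[step]:.3e}")
```

Running the sketch shows the gap between the two trajectories growing from a negligible value to the same order of magnitude as the values themselves, which is why a forecast built from slightly imperfect measurements eventually loses its accuracy.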

This means that one must take into account everything that can directly or indirectly affect the weather, such as the motion of an insect, heat generated by bacteria or even the light emitted by distant galaxies which has the potential of increasing the energy of a few air molecules. According to Buddhism, an intelligent machine can only be created when it is given both the power to think and to become enlightened. Creating a mind that can be enlightened can be a real challenge for developers of AI systems.

Artificial Intelligence and Global Risk

The greatest mistake that is often made by people is to assume too quickly that they understand something. Most people profess to understand the concept of genetically modified organisms, evolution and even artificial intelligence. Advocates of AI agree that AI is known for promising so much while at the same time delivering too little.

AI is certainly not a simple subject, but people often treat it as some sort of fantasy subject that requires little or no attention. This is embarrassing indeed. Consider other disciplines like astronomy, whose reputation never gets ruined by promising to create stars from hydrogen and then failing to create even a tiny one. This shows that the problem is not that AI is uniquely complicated but that people tend to think they know more about it than they actually do.

Villains, dignified men, men of the cloth, you name them: none, if asked to press a button that would lead to the total destruction of the world, would ever agree. They all need a platform from which each can carry out his noble or ignoble duties. The destruction of the world would therefore be more a matter of accident than of design.

Human thought works in a way that allows it to provide accurate or approximate answers. People can easily tell what risks are capable of causing deaths more than others. However, when asked to be precise, they tend to overestimate risks that cause few deaths and underestimate those that can cause more deaths.

This shows that human thought is prone to error. When a group of people were asked by a researcher to estimate the likely causes of death in the United States, the majority noted down that homicide caused more deaths than diabetes. Other studies have consistently shown that human judgment is not free of errors.

Reports from various quarters noted that people were unwilling to buy flood insurance policies even when they were heavily subsidized. People tend to underestimate the threat that floods pose to their lives. They are simply not able to conceptualize or imagine a threat that has never materialized (Levy, 2010).

People living in flood-prone areas will often take comfort in the levees and dams built to control floods. However, what they forget is that serious damage could occur should a single uncontrollable flood strike. If people get used to controlling minor threats, they soon become complacent and tend to treat the occurrence of major hazards as unlikely. Talk of dangers that threaten the very existence of man and nobody will take you seriously, for no such danger has ever faced humanity.

Can machines use thought to assist human beings?

The search for intelligent life in outer space is nothing new. Through the Search for Extraterrestrial Intelligence (SETI) program, scientists have tried without success to determine whether beings other than man possess intelligence or whether there is a possibility of intelligent beings living in outer space. All these efforts have borne no fruit. It must feel lonely indeed being the only self-aware beings in the universe.

There are numerous fiction movies, like Star Wars, that suggest the possibility of developing artificial intelligence in machines in incredible ways. If anything, achieving such levels of intelligence in machines is still a pipe dream. This does not mean that artificial intelligence is not in use today.

Factories use robotic arms that can handle delicate tasks. Cars are fitted with micro computers that can detect slight changes in driving style and road conditions. The problem is whether AI researchers are able to come up with intelligent machines that are capable of engaging humans in objective dialogue. To understand how this is possible, it would be proper to discuss the difference between people and computers.

Logic in man is not equivalent to computer logic, which is discrete. This means that a computer cannot give any answer other than the single answer that it was programmed to give. This makes it easy to plot a computer’s decisions on a decision tree. Each discrete decision is represented by a single node. This enables the computer system to search and examine every one of these decisions.

On the other hand, people do not make static decisions. For instance, if a group of persons were asked to state whether Lake X, which is 960 feet deep, is deep or not, some would answer yes and others no. This kind of logic, referred to as fuzzy logic, is certainly not solid. It is mostly based on an individual’s opinion. For a computer, the answer is definite. The answer given by the computer will have been obtained from some programmed rule like “less than 500 feet is shallow; more than 500 feet is deep”.
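The contrast between a fixed rule and a graded judgment can be sketched in a few lines. The 500-foot threshold comes from the example above; the function names and the 200-foot and 1,000-foot anchors of the graded version are assumptions made only for illustration, not values taken from any standard.

```python
def crisp_is_deep(depth_ft, threshold_ft=500.0):
    """Fixed rule: anything deeper than the threshold is 'deep'."""
    return depth_ft > threshold_ft


def fuzzy_deep_membership(depth_ft, shallow_ft=200.0, deep_ft=1000.0):
    """Graded judgment: degree of 'deepness' between 0.0 and 1.0.

    Depths at or below shallow_ft score 0.0, depths at or above deep_ft
    score 1.0, and depths in between rise linearly. Both anchors are
    illustrative assumptions.
    """
    if depth_ft <= shallow_ft:
        return 0.0
    if depth_ft >= deep_ft:
        return 1.0
    return (depth_ft - shallow_ft) / (deep_ft - shallow_ft)


for depth in (499, 501, 960):
    print(depth, crisp_is_deep(depth), round(fuzzy_deep_membership(depth), 3))
```

The fixed rule flips from "shallow" to "deep" between 499 and 501 feet, while the graded version barely changes over the same two feet, which is closer to how a group of people would answer.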

Humans are what they are today as a result of millions of years of evolution. Creating a machine with all human ability within a short period of time would definitely be a daunting task given all the stages that humans had to go through.

Intelligent machines would therefore have to undergo evolutionary processes similar to those of humans in order to gain enough experience and obtain intelligence equivalent to that of humans. Measuring the amount of intelligence obtained through a certain degree of evolution can be quite difficult. Some 60 years ago, a method for determining the level of machine intelligence in comparison to that of man was devised and given the name Turing Test.

Turing Test

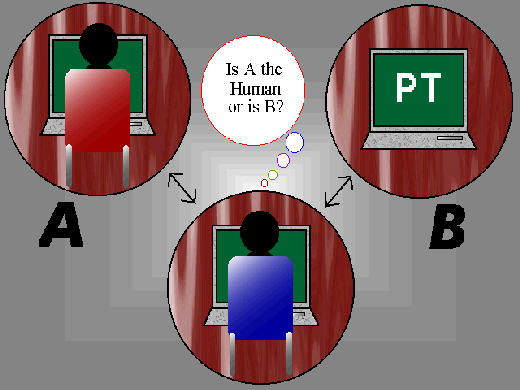

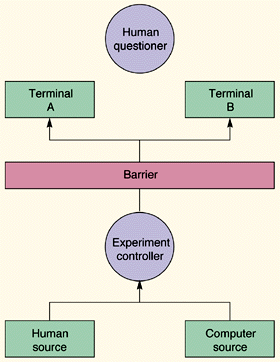

Alan Mathison Turing was a scholar and an outstanding mathematician. He wanted to establish whether it was possible for a computer program to possess intelligence. He devised certain rules that would enable him to accomplish this task. The rules took the form of a game commonly called the imitation game. It involves three people playing together: (A) a man, (B) a woman, and (C) an interrogator, who can be either male or female.

C is locked in a room separate from the rest. The interrogator is supposed to determine which of A and B is the machine and which is the person. C does not know the identities of A and B since they have been labeled X and Y. The interrogator is supposed to say what he or she thinks of X and Y, i.e. X = A or X = B, and Y = A or Y = B. The interrogator asks A and B questions (Penrose et al., 2009).

Turing suggested that if the responses of a computer were realistic enough, it would be impossible to distinguish between the real person and the computer. People have always wondered whether the Turing Test measures the smartness of the human interrogator rather than the machine’s ability to fool the interrogator. The Turing Test has influenced most of the modern chat programs that try to fool a person into believing that he or she is conversing with a human.

The person conversing with a human and a machine is made to believe before the game starts that he or she will be playing with a man and a woman. When the game is over, he or she is asked to state which player, A or B, he or she would identify as the machine. If the interrogator is unable to tell the difference between the machine (an advanced computer) and the human, then the computer is deemed to have passed the intelligence test (Levy, 2010). The test normally takes five minutes.

Scholars have argued that this test cannot be used to define artificial intelligence for a number of reasons:

- A computer can imitate human behavior but not necessarily true intelligence.

- A computer can be intelligent but not able to chat like a real person.

The Turing Test cannot establish consciousness in a machine. Humans sometimes exhibit totally irrational, chaotic, unpredictable and unintelligent behavior. There is also some intelligent behavior that is uncharacteristic of human nature. Artificial intelligence can be faced with major setbacks indeed.

For instance, would such machines be able to experience pain and pleasure, make generalizations, generate ideas, use common sense and so on? Such questions can make the idea of trying to build artificially intelligent systems seem absolutely ludicrous. Does this mean that efforts to create artificially intelligent systems should be abandoned? Absolutely not. One cannot understand something that cannot be created.

Artificial Intelligence Case Study

Somewhere in St. Leo Laboratory, Eddie Brown is fine-tuning the behavior of a machine. Scattered around him is a horde of robots, some of which resemble small all-terrain motor vehicles. They appear to be lifeless and slow pieces of electronic equipment. However, these contraptions are curious in that they reason about the environment and react to changes in their surroundings. Literally speaking, these machines can “think”.

Eddie, a senior artificial intelligence researcher, compares the minds of these contraptions to those of knowledgeable insects that have learned to survive. A housefly is intelligent in that it is able to adapt to its surroundings by doing those things which it can do well so as to increase its chances of survival. Eddie states that, going by that definition of intelligence, these robots are smart.

Researcher Eddie (left)

In a different Laboratory located elsewhere, Eddie collaborates with fellow researchers in the school of computing to build artificial intelligent systems that can make complicated decisions. These researchers have been charged with the task of exploring new applications.

This is a project of the Defense Advanced Research Projects Agency (DARPA). The robots designed should be able to perform two important tasks. First, they should be able to learn from the researchers how to search for biological hazards in rooms. Second, they should be able to detect, intercept and destroy a moving enemy target. The robots should be able to perform these tasks without any assistance from the researchers.

Most universities focus on building robots with low-level performance and basic system-guided movements. Some embark on projects to build machines with a higher level of reasoning. Researchers in the Georgia school of computing are doing everything they can to integrate the different levels of functionality to build robots with human-like behavior for use by the private sector and the military (Brooks, 2009).

Building machines which simulate human knowledge and awareness is no mean task. Consider the case of a common human driver on a highway or a route he or she is used to. This person will drive along at ease without being conscious of the act of driving. This is called reflexive behavior.

However, if the driver gets lost, he or she becomes conscious of driving while trying to find the way out. This is called cognitive reasoning. Artificial intelligence researchers endeavor to make intelligent machines that can think and act as well as learn and apply the required skills.

This task lies squarely within the jurisdiction of specialists who can develop high-level, behavior-based software for robots. This software borrows heavily from neuroscience and psychology. Eddie uses it to control the hardware. Sensors complement both the software and the hardware, and help develop and sustain methods through which robots can acquire and process, in real time, the data they perceive together with information from global positioning satellites.

Conclusion

Just like humans, intelligent machines in the foregoing case can be assisted to gain knowledge through the use of various techniques. The most common technique is Learning Momentum, which was developed by Arkin and his teammates. The robot is taught that if a behavior is working well, it should continue repeating it.

The other technique is reinforcement. This is just like the carrot-and-stick approach used to teach children. Computer-generated rewards are used to encourage the robot every time it makes a good decision, so that it keeps repeating that decision. The foregoing project undertakes to investigate ways through which intelligent systems can interact with humans by instilling psychological properties in them.
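The reward-driven technique described above can be sketched as a simple value update. This is a minimal illustration under stated assumptions, not the software used in the project: the action names, the simulated reward function, the learning rate, and the purely greedy choice rule (no random exploration) are all hypothetical.

```python
def train(actions, reward_for, steps=20, learning_rate=0.5):
    """Repeat whichever action currently has the highest estimated value.

    After each trial the estimate for the chosen action moves part of the
    way toward the reward it just received.
    """
    values = {action: 0.0 for action in actions}
    history = []
    for _ in range(steps):
        # Greedy choice; on a tie, max() keeps the earliest action in the list.
        action = max(actions, key=lambda a: values[a])
        reward = reward_for(action)
        values[action] += learning_rate * (reward - values[action])
        history.append(action)
    return values, history


def simulated_reward(action):
    """Hypothetical computer-generated reward: +1 for a good decision, -1 otherwise."""
    return 1.0 if action == "steer_around_obstacle" else -1.0


values, history = train(
    ["drive_into_obstacle", "steer_around_obstacle"], simulated_reward
)
print(history[:3])
print(values)
```

In this sketch the robot tries the poor action once, is penalized, and from then on keeps repeating the rewarded action. A practical system would usually add some exploration so that a single bad early estimate does not lock in a choice.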

Other than the project that was just discussed, researchers from the College of Computing have undertaken to develop a group of 100 miniature robots to imitate a large-scale system that includes different kinds of machines, humans and robots. The group of robots is expected to work together in an environment that is unfamiliar to them and that is subject to change from time to time.

The sensors are allowed to be error-prone and are able to gather information from different points. They should help the robots detect the movement and position of their counterparts. This acts as the principal means of cooperation and communication. The system is akin to that found in a colony of termites or bees.

The robots will be able to act properly after a long period of time since they will have identified the wrong moves made due to sensor errors. In other words, the robots will have used intelligence to solve movement and spatial problems. Hindrances to advancement in the field of artificial intelligence range from intelligence, ethics and consciousness to perception, locomotion and power storage (Russell and Norvig, 2006).

References

Brooks, R. (2009). Intelligence without representation. The Case Study of Artificial Intelligence, 5(47), 7-23.

Hagen, S. (1999). Buddhism Plain & Simple. New York, NY: Broadway Books.

Levy, S. (2010). Artificial Life: A Quest for a New Creation. New York, NY: Pantheon Books.

Penrose, R., Shimony, A., Cartwright, S., Alfredo, H. (2009). The Small, The Large, and The Human Mind. New York, NY: McGraw Hill.

Russell, S. and Norvig, P. (2006). Artificial Intelligence: A Modern Approach. Upper Saddle River, NJ: Pearson Prentice Hall.