Introduction

Academic search engines and bibliographic databases (ASEBDs) are regarded as the primary platforms for accessing up-to-date scientific publications. Scholars often use ASEBDs as a lens through which to perform analyses (Apuke and Iyendo, 2018; Croft, Metzler and Strohman, 2015). The rise of the Internet in the late 1990s led to the emergence of ASEBDs, which replaced conventional offline systems (Dagienė and Danutė, 2016). In the early 2000s, technological innovations resulted in the development of sizeable crawler-based search engines, such as Microsoft Academic and Google Scholar (Dagienė and Danutė, 2016; Fagan, 2017). Google Scholar established itself as the dominant information source in academia, and it seemed unrivalled in the efficiency with which it provided online scholarly documents (Gusenbauer, 2019; Mingers and Meyer, 2017). Nevertheless, after halting its service, Microsoft Academic relaunched its academic search engine in 2017 to rival Google Scholar (Harzing and Alakangas, 2017b).

In addition to Microsoft Academic and Google Scholar, other information sources, such as Wolfram Alpha, seek to persuade academics of the validity of the information they provide. Unlike these two search engines, Wolfram Alpha is a computational knowledge engine that answers queries by performing computations on the Wolfram database instead of searching the web (Wolfram Alpha, 2020a). Even though people have access to various platforms, it is often unclear which system best suits their needs. Different benchmarks are available for assessing the quality of ASEBDs, for instance, accessibility, industry value, reliability, currency, and strengths and weaknesses. This study compares Google Scholar, Microsoft Academic, and Wolfram Alpha against these five criteria.

Method

Searches were performed in Google Scholar, Microsoft Academic, and Wolfram Alpha to approximate a user’s approach to the same topic, “plane turbine engine”. The search results were evaluated for the information they provided regarding the turbine engine and engineering in general. For every search, the results were examined against the same set of characteristics: accessibility, industry value, reliability, currency, and strengths and weaknesses. In addition, further searches were narrowed by date to generate result sets of reasonable size, allowing for the comparison of unique items retrieved by each system.
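The comparison of unique items can be illustrated with a minimal Python sketch. It assumes that the titles returned by each engine have been collected by hand into lists; the function names (`normalize`, `unique_items`) and the sample titles are hypothetical and are not part of the study's data.

```python
def normalize(title: str) -> str:
    """Lower-case a title and collapse whitespace so near-identical titles compare equal."""
    return " ".join(title.lower().split())


def unique_items(results_by_engine: dict[str, list[str]]) -> dict[str, list[str]]:
    """Return, for each engine, the titles that no other engine retrieved."""
    normalized = {
        engine: {normalize(title) for title in titles}
        for engine, titles in results_by_engine.items()
    }
    unique = {}
    for engine, titles in normalized.items():
        # Union of everything retrieved by the other engines.
        others = set().union(
            *(other for name, other in normalized.items() if name != engine)
        )
        unique[engine] = sorted(titles - others)
    return unique


# Hypothetical sample data, for illustration only.
sample = {
    "Google Scholar": ["Turbine Blade Cooling", "Jet Engine Noise"],
    "Microsoft Academic": ["turbine blade  cooling", "Compressor Stall"],
}
unique_by_engine = unique_items(sample)
```

In this sketch, a title counts as unique to an engine only if no other engine returned a title with the same normalized text; a real comparison would probably need a more robust identifier, such as a DOI.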

Google Scholar

Accessibility

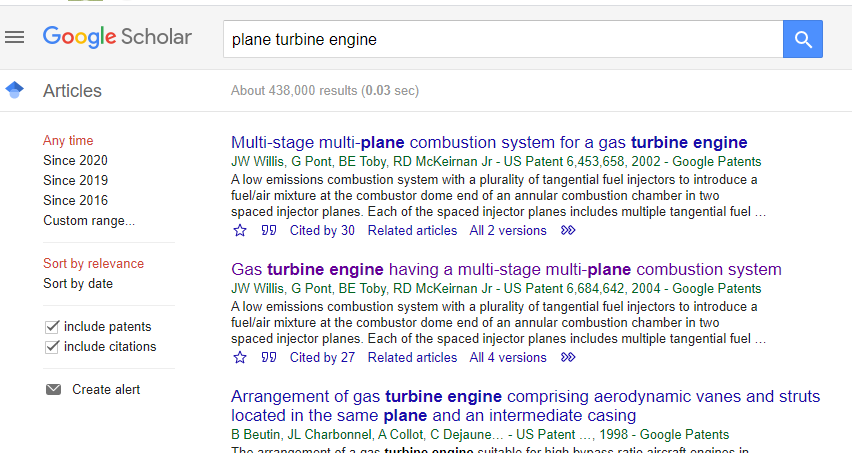

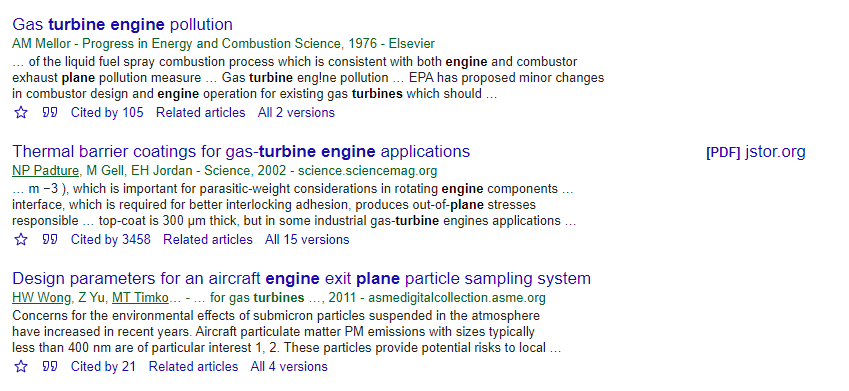

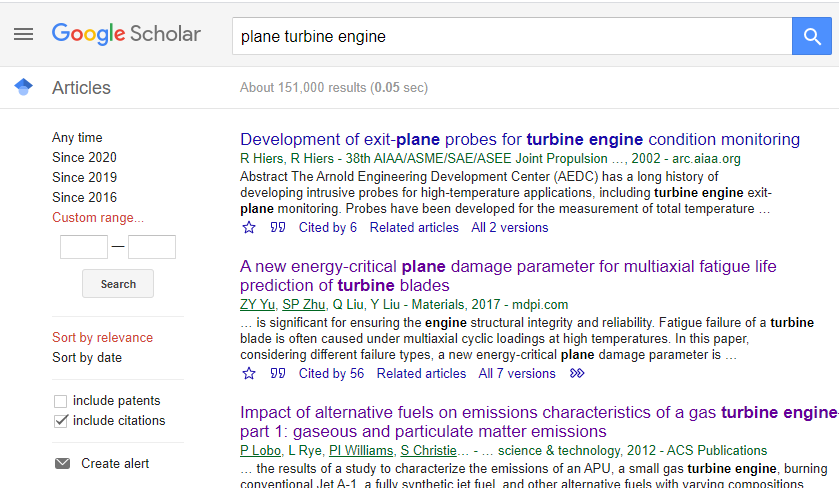

Google Scholar is a free-to-access search engine that gives end-users access to comprehensive and multidisciplinary content. The site has a convenient and straightforward interface that facilitates smooth and quick searching, which contributes to its usability. The search results contained a mixture of freely available full-text articles and articles that require a subscription. Although not all documents were available in full-text form, Google Scholar returned a significant resource of 438,000 documents.

Industry Value

Google Scholar is an excellent engine for individual assessment as it draws from a wide array of documents, some of which go beyond scientific publishing channels (Jamali and Majid, 2015; Martín-Martín, Orduna-Malea and Delgado, 2018). These types of sources have a considerable academic impact on the Web. Moreover, current articles, those less than five years old, comprehensively covered the searched topic. However, on the first page of results, none of the sources had the keywords “plane turbine engine” highlighted in their titles, which diminishes the relevance of the results. In summation, Google Scholar is relatively suitable for researching engineering disciplines (Cole et al., 2018).

Reliability

Google Scholar provides a simple and efficient method of accessing abstracts, books, peer-reviewed papers, and articles from professional societies, academic publishers’ sites, and universities. However, since the system comprises various documents that have not been peer-reviewed, its credibility as a reliable source for scholarly articles wanes (Halevi, Moed and Bar-Ilan, 2017; Harzing and Alakangas, 2016; Martín-Martín et al., 2017).

Currency

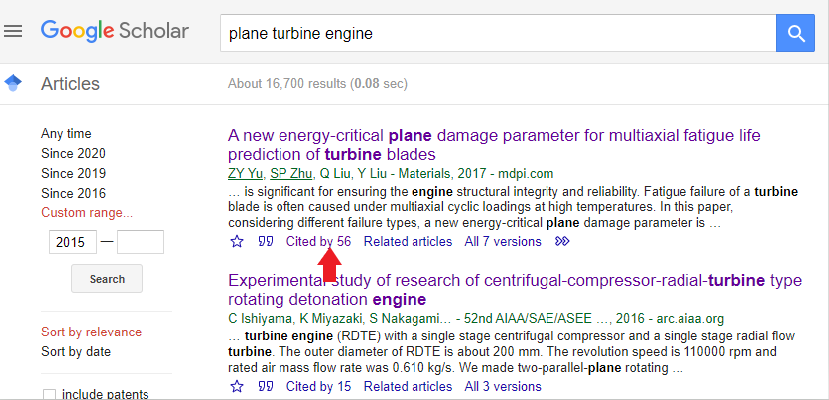

Google Scholar contains documents published from the 1900s to the 2000s. To help users narrow the results to up-to-date information, the engine has a filter option that returns only documents published within a specified date range. For instance, setting the date range to 2015 – 2020 yields a total of 17,800 documents.

Strength and Weakness

One strength of Google Scholar is its simple user interface, in which the main search page consists of a single query box. Furthermore, the site provides access to more grey literature since it retrieves documents from institutional repositories, preprint archives, and conference proceedings, in addition to journals. This is also noted by Bonato (2016) and Haddaway et al. (2015). Lastly, the engine offers a “cited by” feature under each source.

Although it has numerous strengths, the engine also has its flaws. The weaknesses of its search interface include the absence of reliable advanced search functions, the inability to search controlled vocabulary, and the lack of authority control for author and journal names. Some of the unique items retrieved were off-topic. These are probably attributable to “false hits” associated with Google Scholar’s full-text searching, compounded by the lack of controlled vocabulary. For instance, the search was intended to locate articles on the topic of “plane turbine engine”, yet the search engine retrieved 438,000 documents in which the searched words appeared in the full text even where they were not the main topic.
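One rough way to quantify such “false hits” is to check how many retrieved titles contain every word of the query. The Python sketch below is a simplified illustration under that assumption, not the procedure used in this study; the function names and the sample titles are hypothetical.

```python
import re


def title_matches_query(title: str, query: str) -> bool:
    """Return True if every query word appears as a whole word in the title."""
    title_words = set(re.findall(r"[a-z0-9]+", title.lower()))
    return all(term in title_words for term in query.lower().split())


def false_hit_share(titles: list[str], query: str) -> float:
    """Share of titles that do not contain all of the query words."""
    if not titles:
        return 0.0
    misses = sum(1 for title in titles if not title_matches_query(title, query))
    return misses / len(titles)


# Hypothetical first-page titles, for illustration only.
first_page = ["Plane turbine engine design", "Wind turbine maintenance"]
share = false_hit_share(first_page, "plane turbine engine")
```

A title-only check of this kind is deliberately strict: a relevant article may use synonyms such as “aircraft” or “jet”, so the resulting share is best read as an upper bound on false hits.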

Microsoft Academic

Accessibility

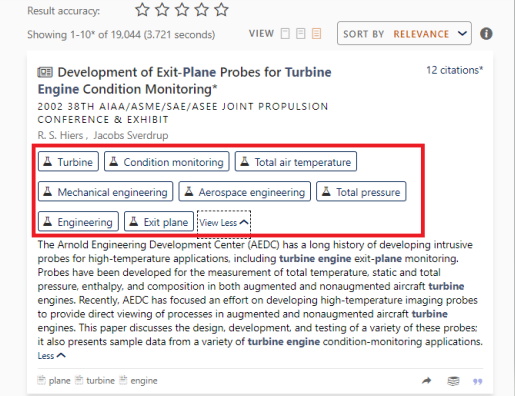

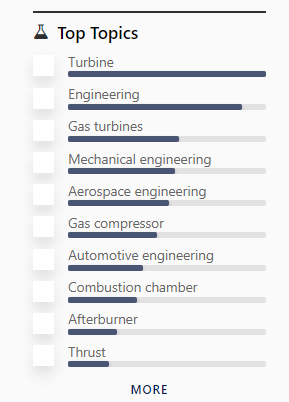

Microsoft Academic is described as a free academic search engine. Items can be filtered based on top topics, publication types, top authors, top journals, top institutions, top conferences, top MeSH descriptors, and top MeSH qualifiers. This allows the user to access the most relevant and up-to-date information easily. Moreover, each source’s keywords are listed under its title, making it easy for the user to discern the relevance of a document without even opening its link.

Industry Value

Microsoft Academic proved to be a useful tool for disciplinary research, for investigations performed at both the institutional and individual levels. This is because its disciplinary coverage of the topic of “plane turbine engine”, and of engineering in general, was more balanced. This balance stems from the fact that the search results gathered items from both journal articles and proceedings papers (Harzing and Alakangas, 2017a). Furthermore, the filters for topics, publication types, authors, journals, institutions, conferences, and MeSH descriptors and qualifiers allow the user to easily access the most relevant and up-to-date information in the engineering discipline.

Reliability

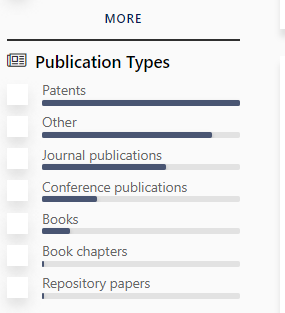

The search results yield documents of different publication types, including patents, journal articles, conference publications, books, book chapters, and repository papers. The relatively higher number of patents and journal publications provides the search system with much-needed credibility and reliability (Thelwall, 2018).

Currency

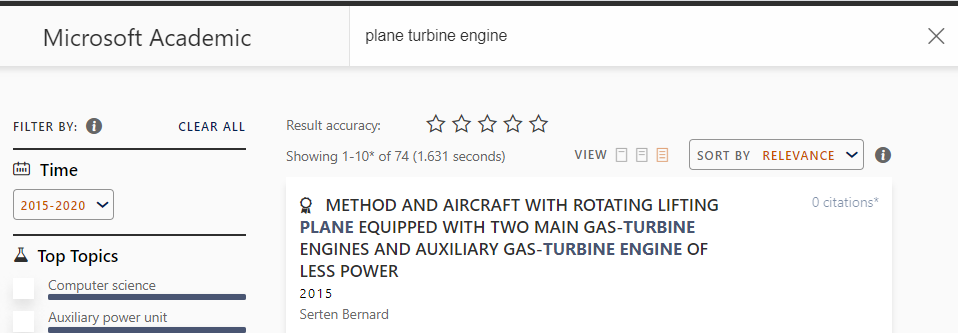

The test search generated a list of documents ranging from 1899 to 2021. The user can therefore adjust the date limits to retrieve articles from the desired period. For instance, setting a time range of 2015 – 2020 generated a total of 74 items.

Strength and Weakness

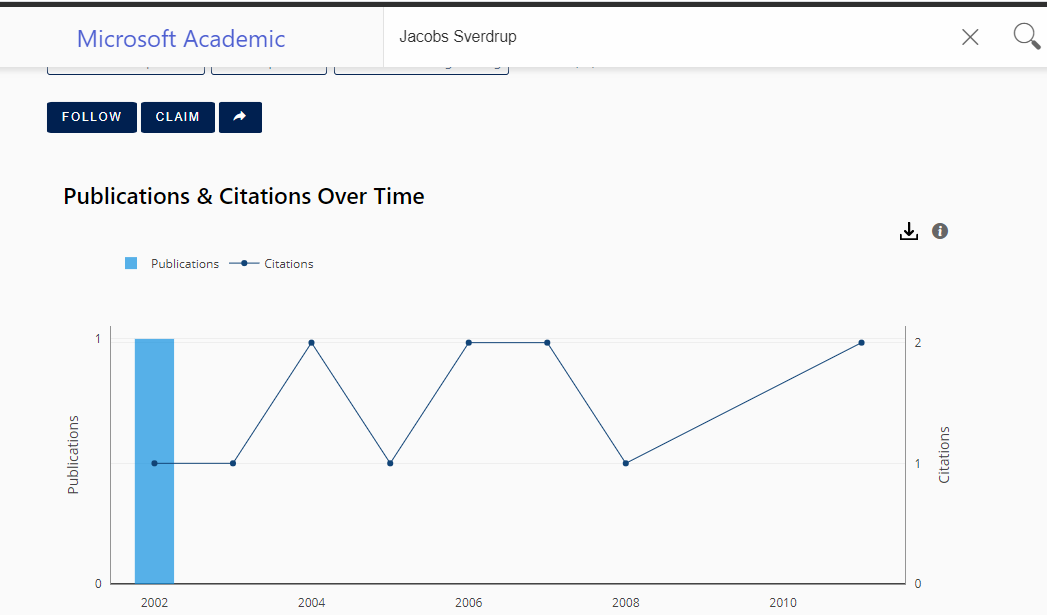

The primary strength of Microsoft Academic is that it displays excerpts from the citations when the links are clicked. Moreover, if a researcher selects the top author filter, the search engine provides data regarding each author’s field of specialization, publications, and citations. In addition, Microsoft Academic offers various sorting and filtering options for refining search results. Microsoft Academic is also known for allowing the retrieval of aggregated citation counts and their frequency distributions, which facilitates citation analysis (Hug, Ochsner and Brändle, 2017).

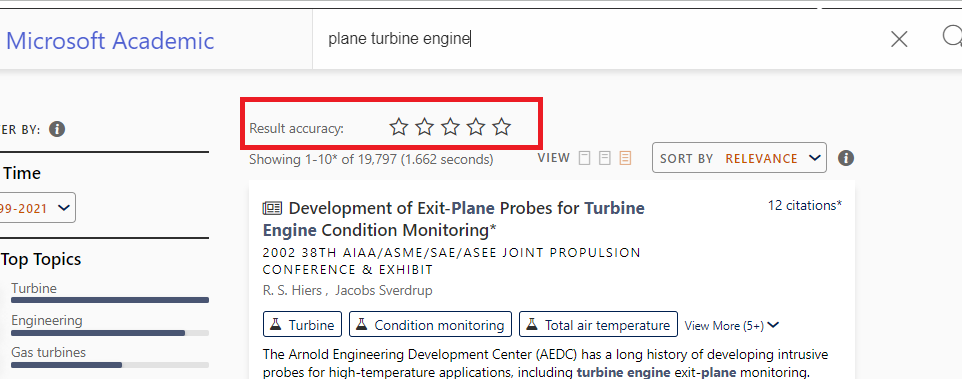

On the other hand, the search engine also has its weaknesses. For instance, it has a more complex user interface. Although the Result accuracy feature is present, its role in the search is unclear because the star ratings are not highlighted. Therefore, its usefulness in aiding the analysis of the search results remains unknown.

Moreover, the complexity of the user interface is evident in the several steps that must be performed before a user can view the full-text article. Clicking on a link on the search page directs the user to the document’s abstract. Unlike in Google Scholar, where the full-text link button is visible, the user in Microsoft Academic has to click on the Lens button and then, on the new page, select the full-text link. However, some journal articles lack a Lens button, so this route is not always available. Consequently, this variation in system features adds complexity when using the system. Lastly, the time filtering option is more complicated than the simple Google Scholar option, in which the user manually types the date range. In Microsoft Academic, the user has to select the “from” date and then drag to select the “to” date, which might be challenging for a new user.

Wolfram Alpha

Accessibility

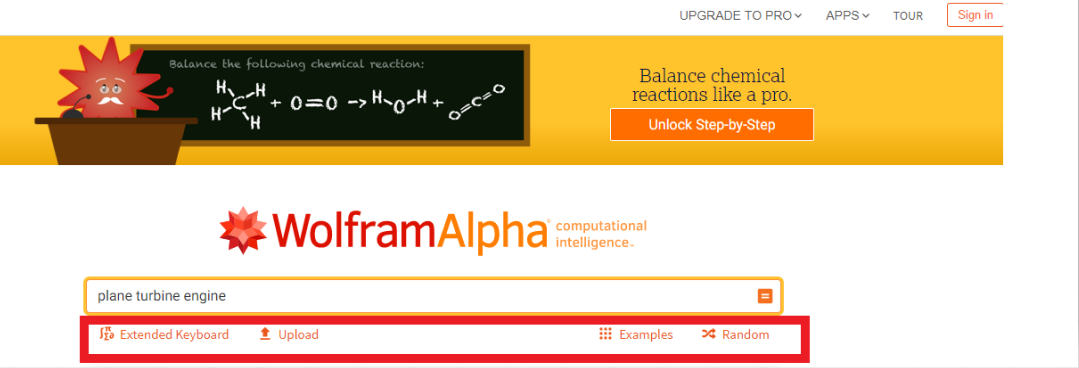

Unlike Google Scholar and Microsoft Academic, Wolfram Alpha offers two types of account, the free account and Wolfram Alpha Pro. The Pro version has several advantages over the free account, including the ability to download data, more computation time, and access to optimized web applications (Wolfram Alpha, 2020a). In addition, the Pro version is further divided into versions for students and for educators, each with different functionalities. Overall, this makes it challenging for a new user to perform investigations. Lastly, it has a complex user interface, as there are several buttons on the home page, and it lacks filtering and sorting capabilities.

Industry Value

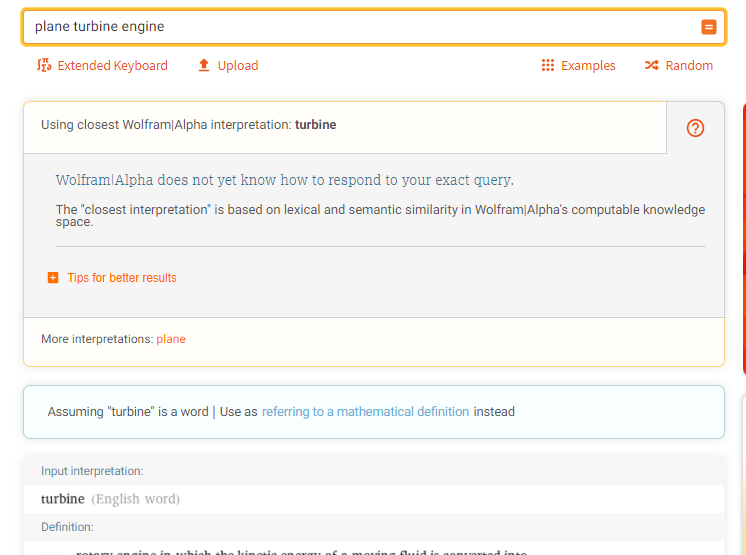

The site is limited with regard to generating sources related to the topic, plane turbine engine, and the engineering discipline in general. This is evidenced by the query message, “Wolfram/Alpha does not yet know how to respond to your exact query” (Wolfram Alpha, 2020b). Furthermore, the search only yielded definitions of the term “turbine” and failed to consider “plane” and “engine”. Therefore, the system can be viewed as having no industry value in assisting academia in performing investigations in the engineering field.

Reliability

Wolfram Alpha can be regarded as unreliable when it comes to conducting scholarly investigations. This is because a search on the topic only generated the definition, pronunciation, first known use in English, word origin, translations, narrower terms, and internet domains, among others, all of which were associated only with the word “turbine”. Since this information was not derived from journal articles, proceedings papers, or other scholarly works, it can be regarded as unreliable.

Currency

The currency of the search results in Wolfram Alpha cannot be established since neither the information source nor the date on which it was created is provided.

Strength and Weakness

Wolfram Alpha has more weaknesses than strengths. Its only strength is that information is categorized for students and educators; therefore, depending on the search’s intent, a user can easily retrieve relevant information. Its weaknesses are that the system has a relatively complex user interface and is unsuitable for non-computational searches. This is evidenced by the absence of journal articles and other scholarly works in the results when a search by topic was performed. Moreover, it produced a “false search”, as the results ignored the other terms, “plane” and “engine”.

Conclusion

With many individuals opting for academic web search engines for research, students and scholars must evaluate the performance of Google Scholar, Microsoft Academic, and Wolfram Alpha. This evaluation is based on the accessibility, industry value, reliability, currency, and strengths and weaknesses of such systems. Performing a direct and absolute comparison between the three sites is challenging as the engines function differently. Although difficult, there is a need to assess the quality of ASEBDs to facilitate the identification of the best engine when conducting scholarly research. Wolfram Alpha, a computational search engine, can be regarded as inferior to Google Scholar and Microsoft Academic, both academic search engines, in the scientific discipline and the academic sphere in general. In contrast, Google Scholar and Microsoft Academic are similar when their strengths and weaknesses are considered. Nevertheless, Microsoft Academic has proven to be superior for conducting investigations concerning specific disciplines. Since these systems are periodically improved and new features added, it is vital to review them after a given period to ensure that their ranking remains relevant.

Reference List

Apuke, O. D. and Iyendo, T. O. (2018) ‘University students’ usage of the internet resources for research and learning: Forms of access and perceptions of utility’, Heliyon, 4(12), e01052.

Bonato, S. (2016) ‘Google Scholar and Scopus for finding gray literature publications’, Journal of the Medical Library Association, 104(3), pp. 252-254.

Cole, C. et al. (2018) ‘Google Scholar’s coverage of the engineering literature 10 years later’, Journal of Academic Librarianship, 44(3), pp. 419-425.

Croft, C. B., Metzler, D. and Strohman, T. (2015) Search engines: Information retrieval in practice. Boston: Pearson.

Dagienė, E. and Danutė, K. (2016) ‘How researchers manage their academic activities’, Learned Publishing, 29(3), pp. 155-163.

Fagan, J. C. (2017) An evidence-based review of academic web search engines, 2014-2016: implications for librarians’ practice and research agenda. Master’s Thesis. James Madison University. Web.

Gusenbauer, M. (2019) ‘Google Scholar to overshadow them all? Comparing the sizes of 12 academic search engines and bibliographic databases’, Scientometrics, 118, pp. 177-214.

Haddaway, N. R. et al. (2015) ‘The role of Google Scholar in evidence reviews and its applicability to grey literature searching’, PloS One, 10(9), e0138237.

Halevi, G., Moed, H. and Bar-Ilan, J. (2017) ‘Suitability of Google Scholar as a source of scientific information and as a source of data for scientific evaluation: review of the literature’, Journal of Informetrics, 11, pp. 823-834.

Harzing, A. W. and Alakangas, S. (2016) ‘Google Scholar, Scopus and the Web of Science: A longitudinal and cross-disciplinary comparison’, Scientometrics, 106(2), pp. 787-804.

Harzing, A. W. and Alakangas, S. (2017a) ‘Microsoft Academic: Is the phoenix getting wings?’, Scientometrics, 110(1), pp. 371-383.

Harzing, A. W. and Alakangas, S. (2017b) ‘Microsoft Academic is one year old: the Phoenix is ready to leave the nest’, Scientometrics, 112, pp. 1887-1894.

Hug, S. E., Ochsner, M. and Brändle, M. P. (2017) ‘Citation analysis with Microsoft Academic’, Scientometrics, 111(1), pp. 371-378.

Jamali, H. R. and Majid, N. (2015) ‘Open access and sources of full-text articles in Google Scholar in different subject fields’, Scientometrics, 105(3), pp. 1635-1651.

Martín-Martín, A., Orduna-Malea, E. and Delgado L., E. (2018) ‘Coverage of highly-cited documents in Google Scholar, Web of Science, and Scopus: a multidisciplinary comparison’, Scientometrics, 116, pp. 2175-2188.

Martín-Martín, A. et al. (2017) ‘Can we use Google scholar to identify highly-cited documents?’, Journal of Informetrics, 11(1), pp. 152-163.

Mingers, J. and Meyer, M. (2017) ‘Normalizing Google Scholar data for use in research evaluation’, Scientometrics, 112, pp. 1111-1121.

Thelwall, M. (2018) ‘Microsoft Academic automatic document searches: accuracy for journal articles and suitability for citation analysis’, Journal of Informetrics, 12, pp. 1-9.

Wolfram Alpha. (2020a) Frequently asked questions. Web.

Wolfram Alpha. (2020b) Plane turbine engine. Web.